Light waves emanate from a source in concentric shells, much like the rings spreading from a stone thrown into a pond. The shells grow larger and larger, so light from a distant source can be described as a flat wavefront. Even through a perfectly polished telescope, the image of a distant point source is blurred somewhat by the finite extent of the aperture, and a larger telescope makes a smaller point image. Large telescopes therefore have better theoretical resolving power than small ones. A telescope that makes images as good as the theoretical resolution is said to be diffraction limited, because its resolution is limited by diffraction at the aperture.
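The size of the diffraction-limited point image can be put in numbers with the Rayleigh criterion, theta = 1.22 lambda / D, for a circular aperture of diameter D. The Python sketch below uses a wavelength of 500 nm and two example apertures. These values are illustrative assumptions, not the parameters of our telescope.

```python
import math

# Conversion factor from radians to arcseconds (about 206265).
RAD_TO_ARCSEC = math.degrees(1.0) * 3600


def rayleigh_limit_arcsec(wavelength_m: float, aperture_m: float) -> float:
    """Angular radius of the first dark Airy ring, 1.22 * lambda / D, in arcsec."""
    return 1.22 * wavelength_m / aperture_m * RAD_TO_ARCSEC


# Illustrative values: green light and two aperture sizes.
for aperture in (0.5, 1.0):
    theta = rayleigh_limit_arcsec(500e-9, aperture)
    print(f"D = {aperture:.1f} m -> {theta:.3f} arcsec")
```

This gives roughly 0.25 arcsec for the 0.5 m aperture and 0.13 arcsec for the 1 m aperture. Doubling the aperture halves the angle, which is the sense in which a larger telescope makes a smaller point image.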

However, if the lens is not perfectly polished, it has aberrations that limit the resolution further. These aberrations can be described as deviations from perfect flatness in the wavefront, and they smear the image.

Now, even if the lens is perfect, a telescope on Earth still has to look through Earth's atmosphere. The temperature of the air varies with altitude, but also in a more random way because of turbulence. The refractive index of air varies with temperature, so turbulent air introduces aberrations of the same kind as an imperfectly polished telescope lens: the wavefront is no longer flat, and the telescope loses resolving power compared to the diffraction limit. To make matters worse, the turbulence, and with it the way the image is smeared, changes on a time scale of hundredths of a second.
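The effect of a non-flat wavefront on the point image can be simulated with a Fourier transform. The point spread function (PSF) is the squared modulus of the Fourier transform of the field across the pupil.

The sketch below (Python with NumPy) compares the on-axis peak of an aberrated PSF with that of a flat wavefront. This ratio is the Strehl ratio. The sketch then checks it against the Maréchal approximation, exp(-sigma^2), which holds for a small rms phase error sigma. The grid size, the pupil radius, and the use of a fixed small defocus in place of a real polishing or turbulence error are all assumptions made for illustration.

```python
import numpy as np


def pupil_grid(n=128, radius=32):
    """Coordinates normalised to the pupil radius, and a circular pupil mask."""
    y, x = np.mgrid[-n // 2:n // 2, -n // 2:n // 2] / radius
    return x, y, (x**2 + y**2 <= 1.0)


def psf(phase, pupil):
    """Intensity PSF for a wavefront phase error (radians) across the pupil."""
    field = np.where(pupil, np.exp(1j * phase), 0.0)
    return np.abs(np.fft.fft2(field)) ** 2  # element [0, 0] is the on-axis point


def strehl(phase, pupil):
    """On-axis PSF intensity relative to that of a perfectly flat wavefront."""
    flat = np.zeros_like(phase)
    return psf(phase, pupil)[0, 0] / psf(flat, pupil)[0, 0]


x, y, pupil = pupil_grid()
defocus = np.sqrt(3) * (2 * (x**2 + y**2) - 1)  # approximately unit-rms defocus shape
phase = 0.3 * defocus                           # small aberration, in radians
sigma = phase[pupil].std()                      # rms wavefront error over the pupil
print(f"Strehl {strehl(phase, pupil):.3f}, Marechal {np.exp(-sigma**2):.3f}")
```

Any deviation from flatness lowers the peak and spreads the light out, which is the smearing described above. Turbulence produces a random phase screen that keeps changing, rather than the fixed shape used here.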

In addition to having polished the optics of the telescope very carefully, we use a combination of three techniques to collect images with a minimum of smearing due to wavefront aberrations:

The most popular sensor for measuring the shape of the wavefront (a wavefront sensor) is the Shack-Hartmann sensor. It relies on the fact that a tilt in the wavefront produces a translation of the image. Subapertures placed over different parts of the full aperture each form an image. By measuring the local tilts from the translations of those images, the shape of the wavefront can be reconstructed.
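The two Shack-Hartmann steps, from image shifts to local tilts and from tilts to a wavefront shape, can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not our sensor software:

- Shifts are measured by centre-of-gravity centroiding. This suits point-like spots; an extended scene such as solar granulation would need a different shift measurement, for example cross-correlation of the subimages.
- The reconstruction is a least-squares fit of only three modes (two tilts and a defocus-like term).
- The function names and the `pixel_scale` parameter are hypothetical.

```python
import numpy as np


def spot_centroid(img):
    """Centre of gravity (x, y) of one subaperture image, in pixels."""
    y, x = np.indices(img.shape)
    total = img.sum()
    return (x * img).sum() / total, (y * img).sum() / total


def local_tilts(spots, reference, pixel_scale):
    """Wavefront tilts (radians) from spot shifts relative to reference positions.

    pixel_scale (hypothetical parameter) is the angle subtended by one pixel.
    """
    centroids = np.array([spot_centroid(s) for s in spots])
    shifts = centroids - np.asarray(reference, dtype=float)
    return shifts[:, 0] * pixel_scale, shifts[:, 1] * pixel_scale


def fit_low_order(xc, yc, sx, sy):
    """Least-squares fit of W(x, y) = a*x + b*y + c*(x**2 + y**2) to measured tilts.

    The model gradients are dW/dx = a + 2*c*x and dW/dy = b + 2*c*y.
    """
    n = len(xc)
    A = np.zeros((2 * n, 3))
    A[:n, 0], A[:n, 2] = 1.0, 2.0 * np.asarray(xc)
    A[n:, 1], A[n:, 2] = 1.0, 2.0 * np.asarray(yc)
    coeffs, *_ = np.linalg.lstsq(A, np.concatenate([sx, sy]), rcond=None)
    return coeffs  # (a, b, c)


# Subaperture centres on a 5 x 5 grid (normalised units) and synthetic tilts
# generated from a known wavefront with a = 0.1, b = -0.2, c = 0.05.
xc, yc = (g.ravel() for g in np.meshgrid(np.linspace(-1, 1, 5), np.linspace(-1, 1, 5)))
sx, sy = 0.1 + 2 * 0.05 * xc, -0.2 + 2 * 0.05 * yc
print(fit_low_order(xc, yc, sx, sy))
```

A real reconstructor fits many more modes, or solves for the wavefront zonally on a grid, but the principle of inverting measured slopes by least squares is the same.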

To take on different shapes quickly and accurately, a mirror has to be small and low-mass. We use a bimorph mirror. Its substrate consists of two piezoelectric layers with opposite polarities, so when a voltage is applied to an electrode covering part of the mirror, a bump appears. By combining bumps from a number of different electrodes, arbitrary shapes (to within the resolution of the mirror) can be created. Our mirror receives control signals from the sensor 1,000 times per second.
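Combining bumps from several electrodes into a desired shape is a linear least-squares problem. Each electrode contributes its influence function (the bump produced by a unit voltage), and the voltages are chosen so that the sum best matches the target surface.

The Python sketch below works on a 1-D cut across the mirror and makes three hypothetical choices: Gaussian bumps, 10 electrodes, and a fixed bump width. A real bimorph mirror would use measured influence functions instead.

```python
import numpy as np


def influence_matrix(x, centres, width):
    """Column j is the surface bump from a unit voltage on electrode j.

    Gaussian bumps are an illustrative assumption, not a bimorph model.
    """
    return np.exp(-(((x[:, None] - centres[None, :]) / width) ** 2))


def electrode_voltages(H, target_shape):
    """Least-squares voltages whose combined bumps best match the target."""
    v, *_ = np.linalg.lstsq(H, target_shape, rcond=None)
    return v


# A 1-D cut across the mirror with 10 hypothetical electrodes.
x = np.linspace(-1.0, 1.0, 200)
H = influence_matrix(x, np.linspace(-1.0, 1.0, 10), width=0.25)
target = 0.3 * x**2 - 0.1 * x  # desired surface shape (arbitrary units)
v = electrode_voltages(H, target)
residual = target - H @ v      # the part the mirror cannot reproduce
rms = lambda s: np.sqrt(np.mean(s**2))
print(f"rms target {rms(target):.4f}, rms residual {rms(residual):.4f}")
```

The residual is the "to within the resolution of the mirror" part: structure finer than the electrode spacing cannot be reproduced. In a loop running 1,000 times per second, a common approach is to precompute the pseudo-inverse of H once and apply it at each cycle.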

AO techniques have been available for night-time astronomy since the late eighties, but AO for solar astronomy was developed later because of its heavier computing requirements. The first solar system was brought online at the Dunn Solar Telescope at Sacramento Peak, New Mexico, in 1998, and our system followed a couple of months later.

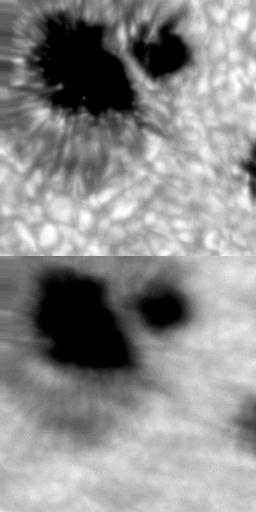

Typical exposure times are much shorter in solar astronomy than in night-time observations. The emphasis of our system is therefore not on maintaining a consistent correction, but on making the moments of good seeing closer to perfect. The movie shown is from our old telescope and gives an idea of what the AO does for us. Note that the quality varies in both tiles, but it is more often good in the upper one.

Image restoration is an alternative to AO, or a way of taking care of the residual errors the AO leaves behind. It is not the same thing as image enhancement, which is just a matter of making images more pleasing to the eye or better suited for presentation. We want to remove errors and restore images to what they were before they were scrambled by the seeing aberrations.

Once we have the point spread function, i.e. what a point-like star would look like with the same errors, we can reverse the scrambling process and restore the image of the solar surface, recovering what the sun really looks like.
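With the point spread function in hand, one standard way to reverse the blurring is a Wiener-type filter in the Fourier domain. It divides by the optical transfer function but damps frequencies where that function is small, so noise is not blown up. This is a generic illustration, not necessarily the method we use.

The sketch makes these assumptions:

- The PSF is known.
- The PSF has the same shape as the image and is centred on pixel [0, 0], as NumPy's FFT expects.
- Boundaries are periodic.
- The regularisation constant `k` is a hypothetical tuning parameter.

```python
import numpy as np


def wiener_restore(image, psf, k=1e-3):
    """Restore an image blurred by a known PSF (PSF origin at pixel [0, 0])."""
    otf = np.fft.fft2(psf / psf.sum())
    filt = np.conj(otf) / (np.abs(otf) ** 2 + k)  # damped inverse filter
    return np.real(np.fft.ifft2(np.fft.fft2(image) * filt))


# Synthetic test: blur a random scene with a Gaussian PSF (periodic boundaries).
n = 64
rng = np.random.default_rng(1)
scene = rng.random((n, n))
idx = np.arange(n)
d = np.minimum(idx, n - idx)  # wrapped distance from pixel 0
psf = np.exp(-(d[:, None] ** 2 + d[None, :] ** 2) / (2 * 2.0**2))
blurred = np.real(np.fft.ifft2(np.fft.fft2(scene) * np.fft.fft2(psf / psf.sum())))
restored = wiener_restore(blurred, psf)
rms = lambda a: np.sqrt(np.mean(a**2))
print(f"rms error: blurred {rms(blurred - scene):.3f}, restored {rms(restored - scene):.3f}")
```

Frequencies where the transfer function is essentially zero cannot be recovered by any filter. This is why restoration can only partly undo the effect of a finite aperture.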

There are different methods for doing this. We use a technique called "phase diversity", where simultaneous image pairs taken in and out of focus are combined to make a restored image. The in-focus image has the most information about the object (the solar surface), while the out-of-focus image has more information about the aberrations. For best results, several image pairs collected close in time (so the sun does not have time to change) are used. The aberrations are estimated from the data, which gives the point spread function we need to undo the effects of the aberrations. The restoration process corrects both for the effects of the seeing and (partly) for the effects of the finite aperture of the telescope.
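The core of phase diversity can be sketched with the standard two-image formulation. In that formulation the unknown object is eliminated analytically, leaving an error metric to minimise over the aberration parameters:

sum over frequencies of |D1 S2 - D2 S1|^2 / (|S1|^2 + |S2|^2)

Here D1 and D2 are the Fourier transforms of the in-focus and out-of-focus images, and S1 and S2 are the corresponding optical transfer functions. The object estimate then follows as (D1 conj(S1) + D2 conj(S2)) / (|S1|^2 + |S2|^2).

This Python/NumPy sketch is a deliberately reduced toy, not our pipeline. It assumes:

- a single image pair with no noise and periodic boundaries;
- one unknown aberration (astigmatism), found by grid search rather than a proper optimiser;
- a known defocus diversity.

All names and numbers are hypothetical. The multi-pair version described above fits several pairs jointly with a common object, and that generalisation is not shown here.

```python
import numpy as np

N, R = 64, 16  # grid size and pupil radius in pixels (assumed; Nyquist-sampled PSF)
y, x = np.mgrid[-N // 2:N // 2, -N // 2:N // 2] / R
pupil = (x**2 + y**2 <= 1.0).astype(float)
ASTIG = x**2 - y**2    # shape of the unknown aberration (one mode, for illustration)
DEFOCUS = x**2 + y**2  # shape of the known diversity


def otf(phase):
    """Optical transfer function for a pupil phase (radians)."""
    field = pupil * np.exp(1j * phase)
    psf = np.abs(np.fft.fft2(field)) ** 2
    return np.fft.fft2(psf / psf.sum())


def pd_metric(D1, D2, S1, S2, eps=1e-12):
    """Phase-diversity error metric with the object eliminated analytically."""
    num = np.abs(D1 * S2 - D2 * S1) ** 2
    return np.sum(num / (np.abs(S1) ** 2 + np.abs(S2) ** 2 + eps))


def phase_diversity(img_focus, img_defocus, diversity, candidates, reg=1e-3):
    """Grid-search the astigmatism coefficient, then estimate the object."""
    D1, D2 = np.fft.fft2(img_focus), np.fft.fft2(img_defocus)
    scores = [pd_metric(D1, D2, otf(a * ASTIG), otf(a * ASTIG + diversity * DEFOCUS))
              for a in candidates]
    a_best = candidates[int(np.argmin(scores))]
    S1, S2 = otf(a_best * ASTIG), otf(a_best * ASTIG + diversity * DEFOCUS)
    F = (D1 * np.conj(S1) + D2 * np.conj(S2)) / (np.abs(S1) ** 2 + np.abs(S2) ** 2 + reg)
    return a_best, np.real(np.fft.ifft2(F))


# Synthetic data: a random scene seen through 0.6 rad of astigmatism at the pupil edge.
rng = np.random.default_rng(0)
scene = rng.random((N, N))
true_a, diversity = 0.6, 3.0
img1 = np.real(np.fft.ifft2(np.fft.fft2(scene) * otf(true_a * ASTIG)))
img2 = np.real(np.fft.ifft2(np.fft.fft2(scene) * otf(true_a * ASTIG + diversity * DEFOCUS)))
a_est, restored = phase_diversity(img1, img2, diversity, np.arange(-20, 21) * 0.05)
print(f"true {true_a}, estimated {a_est:.2f}")
```

The out-of-focus image is what makes the estimate unambiguous. For an even aberration such as this astigmatism, the in-focus image alone cannot distinguish a coefficient from its negative, but the added defocus breaks that symmetry.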

Here is an example of a restored image from the Nature data set.

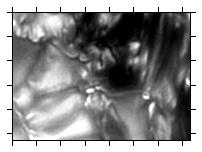

The following four image pairs are the in-focus and out-of-focus images used to make the restored image. Tick marks are at 1 arcsec intervals.

(Image restoration figures: restored image and observed image pairs 1 to 4.)

Time-stamp: <2013-04-19 11:09:31 mats>